The Ultimate Guide to Entropy and the Arrow of Time

Unlock the secrets of the universe’s ultimate driving force. Discover how **Entropy** explains everything from the Big Bang to your daily productivity slump, giving time its one-way direction. This deep dive into the Second Law is essential reading for every curious mind.

Have you ever noticed how easy it is for things to fall apart, but incredibly hard to put them back together? I mean, really think about it. That coffee mug you accidentally dropped? It shatters instantly. But wait for all those tiny pieces to spontaneously jump back together into a mug. Impossible, right? Or how about your desk? You clean it meticulously, and yet, within a week, it seems to have magically descended into chaos. 😊

It’s not bad luck or a personal failing; it’s a fundamental law of the universe at work: **Entropy**. It’s arguably the most profound, yet least understood, concept in science. When we talk about physics, most people focus on energy, but the true master architect of change isn’t the *amount* of energy, but its *quality*, or rather, its tendency to spread out. This spreading out is what gives our universe a direction—what physicists call the **Arrow of Time**.

In this deep dive, we’re not just defining terms. We’re exploring why the universe has a past and a future, how life itself accelerates this process, and critically, how understanding **Entropy and the Arrow of Time** can actually make you more productive. Ready to look under the hood of reality? Let’s go!

Article Contents 📝

- 1. The Unstoppable March: Understanding Entropy and the Second Law

- 2. Boltzmann’s Revelation: Entropy as a Game of Probability

- 3. The Universe’s Driver: Entropy’s Connection to the Arrow of Time

- 4. Life’s Paradox: How Living Systems Increase Global Entropy

- 5. Applying the “Arrow of Time” to Daily Life and Productivity

- 6. Frequently Asked Questions (FAQ)

1. The Unstoppable March: Understanding Entropy and the Second Law 🚀

The formal concept of **entropy** emerged not from cosmic philosophy, but from a very practical, 19th-century industrial problem: steam engine efficiency. In 1824 Sadi Carnot, a French engineer, analyzed the ideal heat engine. His work laid the foundation on which Rudolf Clausius and Lord Kelvin built the Second Law around 1850, and Clausius coined the word “entropy” in 1865.

The Core Concept: Why Energy Spreads Out

The First Law of Thermodynamics says energy is conserved: it can’t be created or destroyed. Great! But the Second Law adds a crucial, almost philosophical layer: while energy is conserved in *quantity*, it tends to degrade in *quality*. When you burn fuel, the energy doesn’t vanish; it turns into heat and spreads out into the atmosphere. Once it’s spread out, you can’t easily gather it back to run an engine. **Entropy** is what measures that dispersal: the more spread out and ‘useless’ the energy has become, the higher the entropy.

💡 Key Insight!

**Entropy** is, loosely, a measure of the **unavailability** of energy for doing useful work. Think of it as the degree of energy dispersal in a system. When energy is concentrated (like a charged battery, or a hot stove in a cool room), entropy is low; when it’s spread evenly (everything at room temperature), entropy is high.
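As a minimal sketch of why spreading energy out raises entropy, the Python snippet below applies Clausius’s relation $\Delta S = Q/T$ (the entropy change when heat $Q$ enters or leaves a body at temperature $T$, in kelvin) to heat leaking from a hot object to a cooler one. It assumes both objects are large enough that their temperatures don’t change; the function name and the stove/room numbers are illustrative.

```python
def entropy_change_of_heat_leak(q_joules: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change (J/K) when heat q_joules flows from a hot body to a cold one.

    Assumes both bodies are large enough that their temperatures (in kelvin)
    stay fixed, so Clausius's relation dS = Q/T applies to each one directly.
    """
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("temperatures must be positive, in kelvin")
    ds_hot = -q_joules / t_hot    # the hot body loses heat q_joules
    ds_cold = q_joules / t_cold   # the cold body gains the same heat
    return ds_hot + ds_cold


# 1000 J leaking from a 400 K stove into a 300 K room
print(entropy_change_of_heat_leak(1000.0, 400.0, 300.0))  # about 0.83 J/K
```

The energy is identical on both sides of the ledger; only its temperature drops. Because the same $Q$ divided by a smaller $T$ gives a bigger number, the cold side gains more entropy than the hot side loses, so the total goes up.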

Carnot’s Revelation: The Efficiency Limit of Heat Engines

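Carnot showed that even an *ideal* engine cannot convert 100% of heat into work. Why? Heat drawn from the hot reservoir carries entropy with it, and to complete the cycle and return the piston to its starting point, the engine must get rid of that entropy. The only way to do so is to exhaust some heat to a colder reservoir. This waste heat is unavoidable: even a perfect engine can turn at most a fraction $1 - T_c/T_h$ of the heat into work, where $T_h$ and $T_c$ are the hot and cold temperatures in kelvin. Engineers capture this limit in the Kelvin-Planck statement of the Second Law: no cyclic engine can turn heat from a single reservoir entirely into work.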
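The entropy bookkeeping behind Carnot’s limit fits in a few lines. The Python sketch below models one cycle of an ideal (reversible) Carnot engine using the standard Carnot efficiency $\eta = 1 - T_c/T_h$, with temperatures in kelvin. The function name, the returned dictionary and the example numbers are illustrative assumptions.

```python
def carnot_cycle(q_hot: float, t_hot: float, t_cold: float) -> dict:
    """Energy and entropy bookkeeping for one cycle of an ideal Carnot engine.

    q_hot: heat drawn from the hot reservoir (J); t_hot, t_cold in kelvin.
    """
    if not (t_hot > t_cold > 0):
        raise ValueError("need t_hot > t_cold > 0 (kelvin)")
    efficiency = 1.0 - t_cold / t_hot
    work = efficiency * q_hot
    q_cold = q_hot - work  # First Law: heat not turned into work is exhausted
    # Second Law: entropy taken in from the hot side must leave on the cold side.
    entropy_change = -q_hot / t_hot + q_cold / t_cold
    return {"efficiency": efficiency, "work": work,
            "q_cold": q_cold, "entropy_change": entropy_change}


cycle = carnot_cycle(q_hot=1000.0, t_hot=500.0, t_cold=300.0)
print(cycle)  # efficiency 0.4: 400 J of work, 600 J exhausted, entropy change ~0
```

For the ideal engine the total entropy change comes out to zero, because the entropy drawn in with $Q_h$ leaves with the waste heat $Q_c$. Any real engine exhausts more heat than this minimum and so produces a net entropy increase.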

| Thermodynamic Law | Core Principle & Connection to Entropy |
|---|---|
| First Law | Energy is conserved (the total energy of an isolated system stays constant). |
| Second Law | The entropy of an isolated system never decreases, and it increases in every irreversible process (the *quality* of energy degrades, giving rise to the **Arrow of Time**). |

2. Boltzmann’s Revelation: Entropy as a Game of Probability 🎲

At first, **entropy** was purely a concept tied to heat and engineering. Then came Ludwig Boltzmann, who in the 1870s helped found statistical mechanics and transformed **entropy** from a messy engineering concept into a beautiful statement about probability. This is where things get truly mind-blowing, trust me.

Disorder vs. Likelihood: Counting the Configurations

We often define **entropy** as ‘disorder,’ but Boltzmann reframed it as the number of ways a system can be arranged while still looking the same macroscopically. Imagine a box with two compartments, and track only which side each gas molecule is on. If all the molecules are on the left side (low **entropy**), there’s only *one* way for that to happen. But if they’re spread evenly (high **entropy**), the number of possible arrangements is astronomical: with just 100 molecules there are about $10^{29}$ ways to split them 50/50, and a real box holds vastly more than 100 molecules. The universe isn’t *seeking* disorder; it’s simply moving toward the state that has the highest mathematical probability of existing.
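The counting argument can be checked directly. This short Python sketch uses `math.comb` (Python 3.8+) to count arrangements for 100 molecules. It treats the molecules as distinguishable and tracks only which half of the box each one occupies; the function name is illustrative.

```python
from math import comb


def count_microstates(n_molecules: int, n_left: int) -> int:
    """Number of ways to choose which n_left of n_molecules sit in the left half.

    Treats molecules as distinguishable and records only which side each is on.
    """
    return comb(n_molecules, n_left)


n = 100
all_left = count_microstates(n, n)         # exactly 1 arrangement
even_split = count_microstates(n, n // 2)  # about 1.01e29 arrangements
total = 2 ** n                             # each molecule independently left or right

print(f"all on the left:  {all_left}")
print(f"50/50 split:      {even_split:.3e}")
print(f"P(all left)     = {all_left / total:.3e}")
print(f"P(exact 50/50)  = {even_split / total:.3f}")
```

Only the exact 50/50 split is counted here, and on its own it has only about an 8% chance. Splits *near* 50/50 collectively dominate, and that near-even spread is what the box looks like macroscopically.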

Macrostates and Microstates: The Statistical Mechanics of Entropy

Boltzmann’s famous equation, inscribed on his tombstone, links the macroscopic property of **entropy** ($S$) to a microscopic count ($W$, the number of microstates):

The Boltzmann Equation 📝

$S = k \ln W$

- $S$: Entropy

- $k$: Boltzmann constant, a fundamental constant ($k = 1.380649 \times 10^{-23}$ J/K, exact by definition in the SI)

- $W$: The number of microstates (arrangements) corresponding to the observed macrostate.

The equation simply states: The more ways there are for a system to be configured, the higher its **entropy** is. And since there are vastly more disordered configurations than ordered ones, disorder is the most likely outcome.
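To connect the equation to real numbers, here is a hedged Python sketch that evaluates $S = k \ln W$ for the evenly spread gas from the two-compartment example. It keeps the same simplification of tracking only which half each molecule occupies. It works with $\ln W$ via `math.lgamma`, because $W$ itself is far too large to write down for realistic molecule counts. The constant values are the exact 2019 SI definitions; the function names are illustrative.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K (exact in the 2019 SI)
N_A = 602214076 * 10**15  # Avogadro constant, 1/mol (exact in the 2019 SI)


def ln_even_split_microstates(n: int) -> float:
    """ln W for n distinguishable molecules split evenly between two halves.

    W = C(n, n//2), computed through log-gamma so that W itself (a number
    with roughly 0.3 * n digits) never has to be built.
    """
    half = n // 2
    return math.lgamma(n + 1) - math.lgamma(half + 1) - math.lgamma(n - half + 1)


def boltzmann_entropy(ln_w: float) -> float:
    """S = k ln W, taking ln W as input."""
    return K_B * ln_w


# All molecules in the left half: W = 1, so S = k ln 1 = 0 (left/right counting only).
# Spread evenly, S = k ln C(n, n/2), which approaches n k ln 2 for large n.
for n in (100, N_A):
    s = boltzmann_entropy(ln_even_split_microstates(n))
    print(f"n = {n:.3e}: S = {s:.3e} J/K  (n k ln 2 = {n * K_B * math.log(2):.3e} J/K)")
```

For one mole of gas the result is about 5.76 J/K. That matches $R \ln 2$, the textbook entropy increase for an ideal gas freely expanding into twice its volume.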

3. The Universe’s Driver: Entropy’s Connection to the Arrow of Time 🕰️

This is the big one. If the fundamental laws of physics work equally well forwards and backwards in time (and, apart from some subtle exceptions in particle physics, they do), why does time only move forward? Why can’t we ‘un-cook’ an egg? The answer lies in the Second Law and the concept of the **Arrow of Time**.

Why Time Doesn’t Run Backward: The Non-Reversible Nature of Change

Any process that increases the total **entropy** of the universe is fundamentally irreversible. Smashing the coffee mug? That’s an irreversible **entropy** increase: the fragments scatter, and the energy of the impact dissipates as heat and sound. The universe moves from a highly specific, low-probability state (intact mug) to a high-probability state (shattered pieces). Reversing that process would require a massive, spontaneous decrease in entropy, which is so improbable on a macroscopic scale that it effectively never happens.
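The statistical character of irreversibility shows up in a toy simulation. The Python sketch below uses the Ehrenfest urn model, a classic textbook stand-in for gas molecules shuffling between two halves of a box. This is our substitution; the model isn’t part of the discussion above. Each microscopic move treats left and right symmetrically, yet a box that starts with every molecule on the left drifts to roughly 50/50 and, in practice, stays there. The function name, seed and sizes are illustrative choices.

```python
import random


def ehrenfest(n_molecules: int, steps: int, seed: int = 0) -> list[int]:
    """Ehrenfest urn model: start with every molecule on the left; at each step
    pick one molecule uniformly at random and move it to the other side.

    Returns the number of molecules on the left after each step. The update
    rule favors neither side; the drift toward 50/50 comes purely from counting.
    """
    rng = random.Random(seed)
    left = n_molecules
    history = [left]
    for _ in range(steps):
        # The randomly chosen molecule is on the left with probability left / n.
        if rng.random() < left / n_molecules:
            left -= 1
        else:
            left += 1
        history.append(left)
    return history


history = ehrenfest(n_molecules=100, steps=5000, seed=42)
print("start:", history[0], "| after 5000 steps:", history[-1])
print("most molecules on the left during the last 4000 steps:", max(history[1000:]))
```

Because the model’s long-run distribution is binomial, Kac’s recurrence lemma gives the average wait to see all 100 molecules on the left again as $2^{100} \approx 10^{30}$ steps. For a macroscopic number of molecules the exponent itself is of order $10^{23}$.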

Physicist Arthur Eddington coined the term **“Arrow of Time”** in 1927, arguing that the increase of **entropy** is the one physical process that singles out a direction in time, thus providing the directionality behind our perception of past and future. It underlies the one-way character of every macroscopic process we observe, from stars dying to ice melting.

⚠️ A Crucial Distinction!

While local systems (like a refrigerator or a living cell) can *decrease* their internal **entropy**, they can only do so by expending energy and increasing the **entropy** of their surroundings by at least as much, and in any real process by more. The Second Law holds true for the universe as a whole.
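The refrigerator case can be made concrete with Clausius’s relation $\Delta S = Q/T$. The minimal Python sketch below computes the smallest work the Second Law allows for pumping heat out of a cold space. It assumes an ideal (reversible) refrigerator, fixed temperatures in kelvin and illustrative kitchen values; the function name is hypothetical.

```python
def min_refrigerator_work(q_cold: float, t_cold: float, t_hot: float) -> float:
    """Smallest work (J) needed to pump heat q_cold out of a space at t_cold
    into surroundings at t_hot (both in kelvin), as allowed by the Second Law.

    Entropy bookkeeping: the cold side loses q_cold / t_cold, and the hot side
    gains (q_cold + W) / t_hot. Requiring the total change to be >= 0 gives
    W >= q_cold * (t_hot / t_cold - 1); equality is the ideal, reversible case.
    """
    if not (t_hot > t_cold > 0):
        raise ValueError("need t_hot > t_cold > 0 (kelvin)")
    return q_cold * (t_hot / t_cold - 1.0)


# Removing 1000 J from a 275 K fridge interior into a 300 K kitchen
w = min_refrigerator_work(1000.0, 275.0, 300.0)
ds_total = -1000.0 / 275.0 + (1000.0 + w) / 300.0
print(f"minimum work: {w:.1f} J, total entropy change: {ds_total:.2e} J/K")
```

At this minimum the total entropy change is zero, up to floating-point rounding. A real refrigerator needs more work than this, and the difference ends up as extra heat dumped into the kitchen and a net entropy increase.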

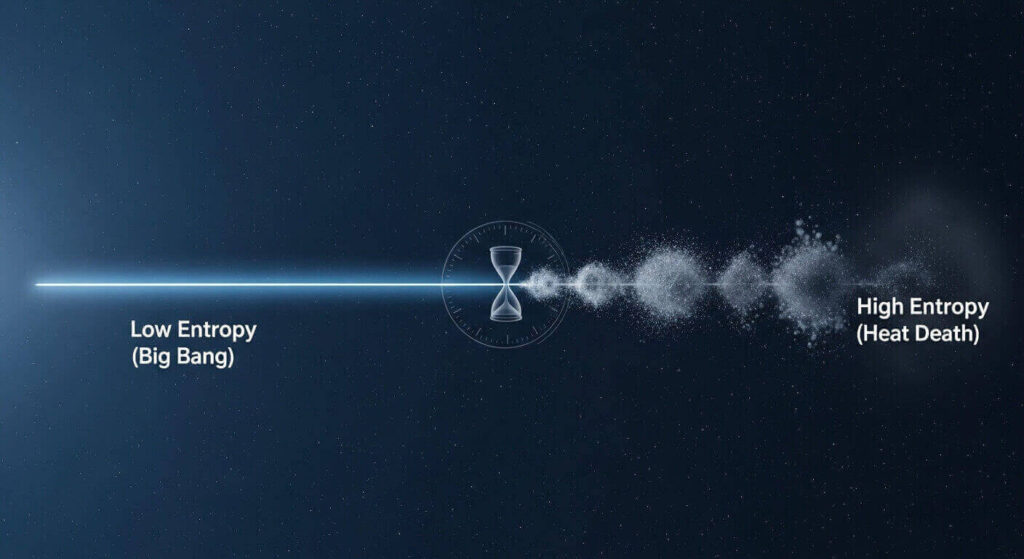

Cosmological Entropy: From the Big Bang to the Heat Death

The fact that the **Arrow of Time** exists implies the universe started in an extremely low **entropy** state. Just after the Big Bang, the universe was extremely hot and dense, yet remarkably smooth. Because gravity makes clumped matter the more probable arrangement, that smoothness corresponds to an incredibly low-probability, incredibly low-**entropy** state. The entire history of the cosmos since then, including the formation of stars and galaxies, is a long, continuous chain of **entropy** increase.

The ultimate end usually extrapolated from the Second Law is the Heat Death of the Universe. This isn’t a fiery end, but a state where all energy is spread out perfectly evenly. Temperatures are uniform, no useful work can be done, and the universe has reached its state of maximum **entropy**. At this point, the **Arrow of Time** effectively ceases to exist because no distinguishing, irreversible events can occur.

4. Life’s Paradox: How Living Systems Increase Global Entropy 🌳

When I first learned about **entropy**, I was so confused about life. I mean, life is the definition of *order*. We build complex structures, from DNA to cities. How can we exist in a universe that demands increasing disorder?

The Sun’s Low-Entropy Fuel and Earth’s High-Entropy Output

The solution lies in the fact that Earth is an open system, constantly fed by the sun. The sun sends us low-entropy, high-energy photons. Our planet (and its life) absorbs this energy and then radiates roughly the same amount back into space, but as high-entropy, low-energy infrared photons, about 20 times as many of them. The total **entropy** generated by this process far outweighs the local decrease in entropy required to form a tree or a human brain. We are only able to create local order by exporting a massive amount of disorder (heat) to the cosmos.
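A rough Python estimate makes Earth’s entropy export concrete. It treats both the incoming sunlight and Earth’s outgoing infrared as blackbody radiation, whose entropy is $S = \tfrac{4}{3}E/T$. It uses the Sun’s effective surface temperature (about 5772 K) and Earth’s effective radiating temperature (about 255 K). It also assumes a steady state in which Earth radiates exactly as much energy as it absorbs. It ignores the fact that sunlight arrives as a dilute beam rather than as full blackbody radiation, so treat the numbers as order-of-magnitude only. The names are illustrative.

```python
T_SUN = 5772.0   # K, effective temperature of the Sun's surface (approximate)
T_EARTH = 255.0  # K, Earth's effective radiating temperature (approximate)


def radiation_entropy(energy: float, temperature: float) -> float:
    """Entropy (J/K) carried by `energy` joules of blackbody radiation at
    `temperature` kelvin, using S = (4/3) E / T for a photon gas."""
    return 4.0 * energy / (3.0 * temperature)


energy = 1.0  # J absorbed from sunlight and later re-radiated (steady state assumed)
s_in = radiation_entropy(energy, T_SUN)
s_out = radiation_entropy(energy, T_EARTH)

# A typical photon's energy scales with temperature, so the same energy
# leaves in roughly T_SUN / T_EARTH times as many photons.
print(f"photons out per photon in: ~{T_SUN / T_EARTH:.0f}")
print(f"entropy exported per joule: {s_out - s_in:.2e} J/K")
```

Under these assumptions each joule passing through Earth carries away about 0.005 J/K of entropy, and the outgoing photons outnumber the incoming ones by a factor of a little over 20.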

Dissipative Structures: Life as an Entropy Accelerator

Some brilliant minds, like the physical chemist Ilya Prigogine, described living systems as **dissipative structures**: ordered patterns that persist only by continuously dissipating energy. In this view, we exist not *despite* the Second Law, but *because* of it. Life can be seen as a highly efficient mechanism for dissipating energy, one that may increase the universe’s total **entropy** faster than a comparable non-living system would. It’s a beautifully counter-intuitive view: we may be among the universe’s most complex and efficient tools for disorder production!

5. Applying the “Arrow of Time” to Daily Life and Productivity 🧠

So, what does the impending Heat Death of the Universe have to do with your Monday morning? Honestly, quite a lot. Applying the laws of **Entropy** to your daily processes can be a massive game-changer.

Fighting Mental Disorder: Managing Information Entropy

Information theory and statistical mechanics are deeply linked. Unmanaged information (piles of documents, cluttered emails, messy digital folders) is, loosely speaking, high-**entropy** information. It takes a huge amount of mental energy and time to sort through, which leaves less of that energy available for useful ‘work’ (like creative problem-solving). Fighting this inevitable slide into chaos requires a continuous input of energy, in the form of an organizational system. You need to spend a little energy consistently to keep your mental and physical space low-**entropy**.

The High-Quality Energy Rule: Applying Carnot to Your Workday

Remember Carnot’s engine? It needed a high-temperature source and a low-temperature sink. For you, the worker, your ‘high-temperature source’ is your peak mental energy: those few hours in the morning when you’re freshest and most focused. That’s your low-**entropy** energy.

If you use that peak energy on low-value, repetitive tasks (like answering routine emails), you are wasting high-quality energy on low-quality work. It’s like burning high-temperature fuel just to warm bathwater: the job gets done, but energy that could have driven an engine is degraded on a task that low-grade heat could have handled. Instead, dedicate your low-**entropy** energy to your most complex, high-impact tasks. Save the lower-energy tasks for when your mental energy has inevitably dispersed (higher **entropy**).

💡

The Laws of Entropy: Summary Card

Fundamental Principle: The Second Law states that the total **entropy** of an isolated system never decreases over time.

The Arrow of Time: This irreversible increase is the physical basis for the **Arrow of Time**, defining the direction from past to future.

Life’s Role (The Paradox): Living systems create local order (low entropy) by generating and exporting a massive amount of global disorder (high entropy), primarily through dissipating solar energy.

Productivity Insight: Treat your peak focus time (low **entropy** energy) as a limited resource. Apply it only to high-leverage, complex tasks to maximize your ‘work’ output.

Physics is not just about the cosmos; it’s about the optimal path forward in your daily life.

Conclusion: Embracing the Universe’s Tendency to Disorder ✨

We’ve taken a massive journey, from the efficiency limits of a steam engine to the ultimate fate of the cosmos. The core takeaway isn’t that everything is doomed to chaos, but that change itself is the result of the universe moving toward more probable states. Entropy isn’t just disorder; it’s the engine of transformation.

Understanding **Entropy and the Arrow of Time** gives you a profound advantage: the ability to recognize which processes require high-energy input to maintain order (your relationships, your health, your professional skills) and which processes are naturally inevitable (your messy closet, your ever-growing to-do list). It teaches us to prioritize our low-entropy energy where it matters most.

What’s your biggest takeaway from the Second Law? I’d love to hear your thoughts or any questions you have about applying this concept. Drop a comment below! 😊

Frequently Asked Questions (FAQ) ❓

Q: Does the existence of life contradict the Second Law of Thermodynamics?

A: Absolutely not! Life does decrease its *local* **entropy** (creating order), but it only achieves this by increasing the **entropy** of its environment by an even greater amount. The total **entropy** of the system (Earth + surroundings) still increases, fully complying with the Second Law.

Q: What is the difference between “Entropy” and “Disorder”?

A: While the two are often used synonymously, **entropy** is more accurately defined in terms of the number of possible microscopic arrangements (microstates) corresponding to a specific macroscopic state, via $S = k \ln W$. What we call high disorder is simply the most *probable* state, because it has the largest number of microstates.

Q: Could the Arrow of Time ever reverse?

A: On a macroscopic scale, no. The **Arrow of Time** is driven by the statistically overwhelming probability of **entropy** increasing. For it to reverse, a massive number of particles would have to spontaneously move toward a low-probability, highly ordered state, which is considered virtually impossible.

Q: How does Entropy relate to Information Theory?

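A: They are mathematically linked! Shannon **entropy** in information theory measures the uncertainty or unpredictability of a message source. A highly entropic (random) source is hard to predict, so in Shannon’s technical sense its messages carry *more* information per symbol, even if that information isn’t useful to us. The connection to physics is formal. Shannon’s formula has the same mathematical form as the Gibbs entropy of statistical mechanics, and both quantify how many possibilities remain unresolved, much as thermodynamic **entropy** counts the microstates hidden behind a single macroscopic state.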
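As a rough illustration, here is a minimal Python sketch of Shannon’s formula $H = -\sum_i p_i \log_2 p_i$, in bits per symbol. For a quick demo it estimates the probabilities $p_i$ from the letter frequencies of a single message. That is a simplification: strictly, entropy belongs to the source that produces messages, not to one message. The function name is illustrative. With $\ln$ in place of $\log_2$ and a factor of $k$ in front, the same expression is the Gibbs entropy $S = -k \sum_i p_i \ln p_i$, which reduces to Boltzmann’s $S = k \ln W$ when all $W$ microstates are equally likely.

```python
import math
from collections import Counter


def shannon_entropy(message: str) -> float:
    """Shannon entropy in bits per symbol, H = sum(p * log2(1/p)), estimated
    from the symbol frequencies of this one message (a simplification)."""
    if not message:
        return 0.0
    n = len(message)
    counts = Counter(message)
    return sum((c / n) * math.log2(n / c) for c in counts.values())


for msg in ("aaaaaaaa", "abababab", "abcdefgh"):
    print(f"{msg!r}: {shannon_entropy(msg):.2f} bits per symbol")
# 'aaaaaaaa': 0.00, 'abababab': 1.00, 'abcdefgh': 3.00
```

The fully predictable message scores zero. The one using eight equally frequent symbols scores the maximum for eight symbols, $\log_2 8 = 3$ bits, just as the most evenly spread macrostate has the highest thermodynamic entropy.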